|

I've been thinking about turning 26 for several years now.

To any non-Americans reading this, that might sound a bit strange. After all, 26 might be an age when many people start to experience their first aches and pains, but it's also an age when people still laugh at you if you try to complain about said aches and pains. And yet, what should be nothing more than another formal reminder that I'm aging is something else entirely. It's the age I lose my parent's health insurance. I've already had a few tastes of this reality (a recent trip to the dentist required me to pay out of pocket, although my dental coverage is so bad this wasn't much more than I expected), but 26 is the age when things become official. As I'm a freelancer, I'm now faced with a dilemma. Do I pay an absurdly high monthly payment for a plan that covers next to nothing? Or do I eat my vegetables, take a multivitamin, and hope that I don't get sick? To understand why being uninsured or underinsured is a reality for me and tens of millions of other Americans, I decided to crack into the topic a bit.

A Brief Look at the History of Healthcare in America

The idea that institutions like governments or businesses should provide health insurance to people was still new in the early 20th century. So new, in fact, that by 1920, only 16 European countries had such a system.

Around that time, the demand for health insurance was low. Medical technology was rudimentary at best, and because of that, the public didn't trust it. Most sick people received treatment at home instead of at local hospitals. However, as medical technology advanced, the idea of receiving professional medical care became more accepted. Whereas a hospital visit might have been a death sentence in the past, people started to associate doctors with saving lives instead of taking them. At the same time, urban houses were becoming smaller and smaller. Households that had the space to cater to sick family members before now struggled to do so. Receiving medical treatment outside of the house became the only option for many.

In 1929, Blue Cross launched the first insurance plan in the United States. This covered 21 days' worth of hospitalization at a fixed rate of $6 per year, providing people with a safety net in case of emergency.

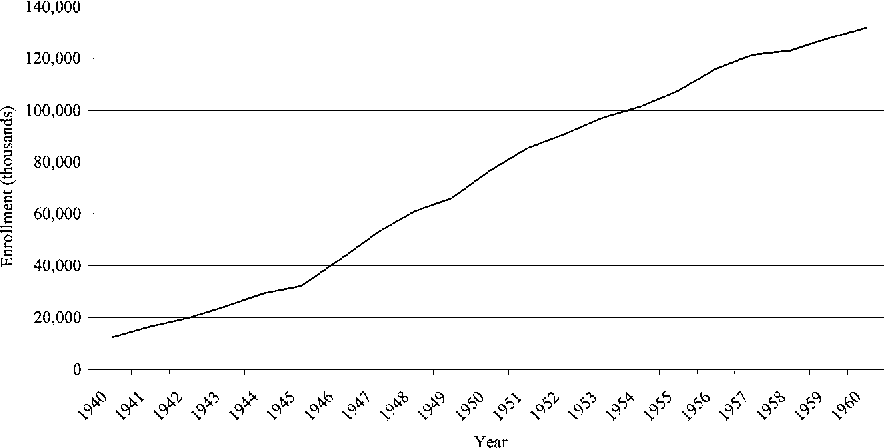

While health insurance enrollment started out slow, insurance plans gained popularity over the next couple of decades. By the 40s and 50s, enrollment began to skyrocket.

Businesses began to understand that offering health insurance was a great way to entice people to work with them. Over the next few decades, the relationship between employment and health insurance grew stronger.

It wasn't until 2010 that the U.S. finally expanded healthcare coverage under the Affordable Care Act. While the ACA helped tens of millions of Americans (including myself), it's faced steep opposition ever since its conception. The Supreme Court shot down the most recent attempt to overturn the ACA in June of this year.

Get a Job!

On paper, working for healthcare doesn't sound like too bad of a deal. You can argue that healthcare is a human right and that it's cruel to deny it to people, but provided you can find a full-time job, you should have no problem getting the coverage you need.

Of course, the reality is quite different. Many Americans (myself included) can't find jobs that offer healthcare. Often, this isn't because the jobs we end up taking are for unskilled workers or high schoolers. It's because businesses know that providing health care and other benefits to employees will affect their bottom line. Companies like Uber and Amazon have made headlines in recent months for cracking down on workers attempting to unionize. Besides demanding a livable wage and enough time to use the bathroom, many workers are also fighting for healthcare. Businesses hire people as "gig workers" or freelancers because they know that doing so gets them out of having to support them. As of 2017, gig workers made up 43% of the U.S. workforce. Today, around half of all American workers are freelancers.

On top of that, many companies also classify the workers they hire as part-time. Even if an employee ends up working the equivalent of a full-time workweek, keeping them around as a part-time employee prevents the company from having to offer healthcare and other benefits.

A private healthcare system doesn't work when you have businesses doing everything in their power to avoid having to provide insurance.

The Big Pharma Problem

While it's bad enough that people have to live without health insurance, Big Pharma makes the situation even more difficult.

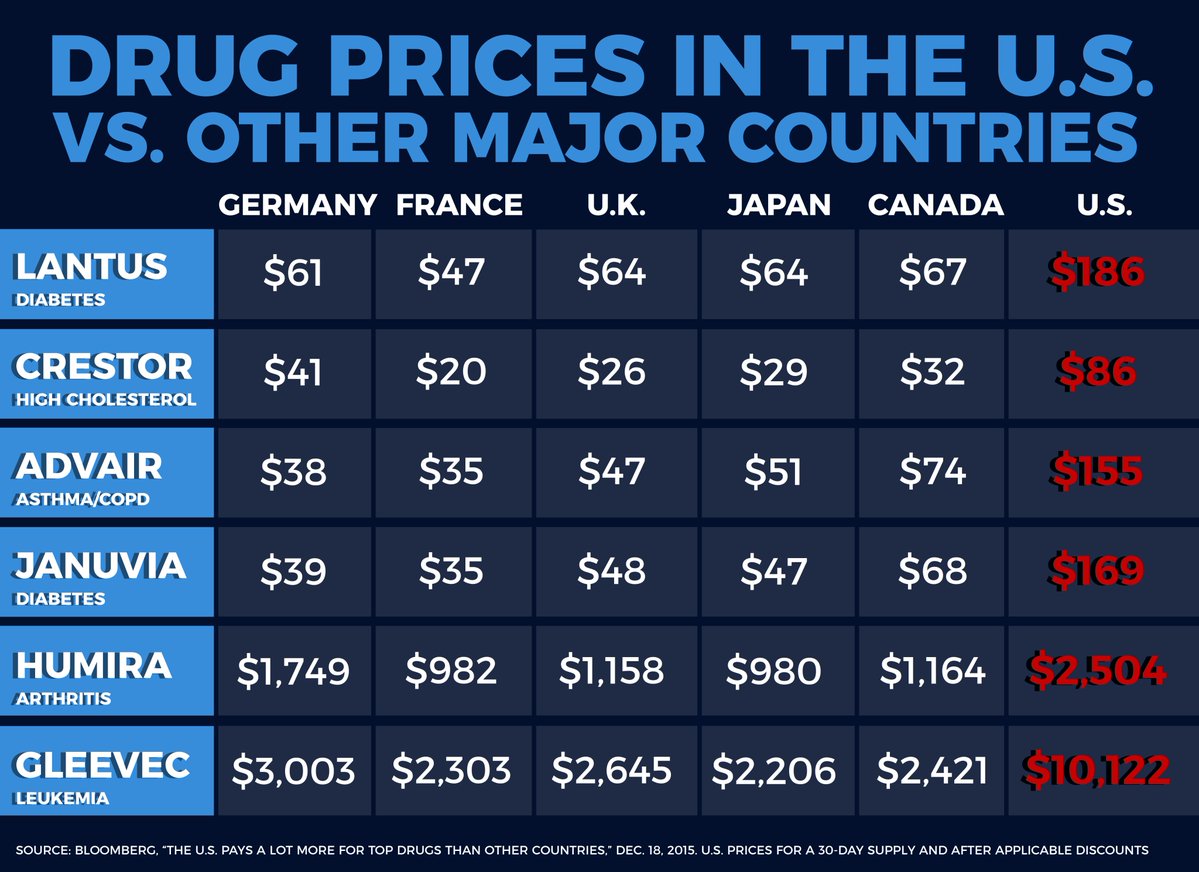

As its insidious-sounding name connotes, Big Pharma refers to the global pharmaceutical industry and, in recent years, how a group of companies holds the health and wellbeing of the world in their hands. Through patents and other legalities, companies are able to charge high prices for drugs that people need to survive. This includes everything from Humira, which helps treat arthritis, to Lantus, which treats diabetes. As is the case with many parts of our healthcare system, this is another distinctly American problem. One quick look at drug prices can tell you that.

Pharmaceutical companies are self-aware enough to recognize that if the average American knew the extent of the price gouging they commit, they'd be ruined. Because of that, they've perpetuated the idea that drug prices reflect medical breakthroughs.

Congresswoman Katie Porter has gone viral several times for using a whiteboard to confront CEOs and other business executives. In one particularly enjoyable exchange, Porter confronted the CEO of the biopharmaceutical company AbbVie. As Porter explains, only a fraction of the company's multibillion-dollar budget goes towards R&D. The vast majority of it goes to a group of people looking to enrich themselves and who are willing to deny others the medications they need to do so. Porter concludes:

Ever-increasing drug prices are a direct consequence of the private health care system in the United States. Through a lack of regulation, pharmaceutical companies have the power to take advantage of all Americans—both those with and without health insurance.

Where We Go From Here

As I've discussed in past articles, politicians and the media often make certain issues seem like divisive topics. Every time Medicare for All gets brought up on the news, we hear arguments from "both sides".

Someone on the Left claims that healthcare is a human right, and someone on the Right responds that universal healthcare is too expensive and that Americans don't want to spend their money paying for other people's healthcare. The panelists agree to disagree (or the debate spirals into something messy), and the host thanks them both. While these sorts of verbal boxing matches can be fun, they create the illusion that many people are still unsure how they feel towards certain issues, Medicare for All included.

The reality is that most Americans support Medicare for All and that this support continues to grow. I'd be willing to bet that if people knew more about universal healthcare and vile mouthpieces stopped using buzzwords like "socialism", even more people would be open to it.

Either way, having people against healthcare accessibility on the news is akin to inviting flat Earthers on air. The vast majority of people recognize that the planet is round, so why should we waste time giving a ludicrous minority a platform? Nearly 70% of Americans support Medicare for All in some way, shape, or form, and as mentioned, many of those who don't just lack an understanding of the topic. So why do we keep hearing that people who supposedly represent the will of the people are so vehemently against universal healthcare?

The Arguments Against Medicare for All

Media pundits and politicians aside, it's important to recognize that many of those nervous about Medicare for All do come from places of sincerity. The reality is, however, that their fears are usually exaggerated, inaccurate, and come from people who do know better.

Let's take a look at a few of them.

Medicare for All Is Expensive

This is a common concern that everyone from ordinary people to so-called "liberal progressive socialist Marxist" presidents seem to share. People worry that a universal healthcare system like Medicare for All would cause the average American to pay more in taxes.

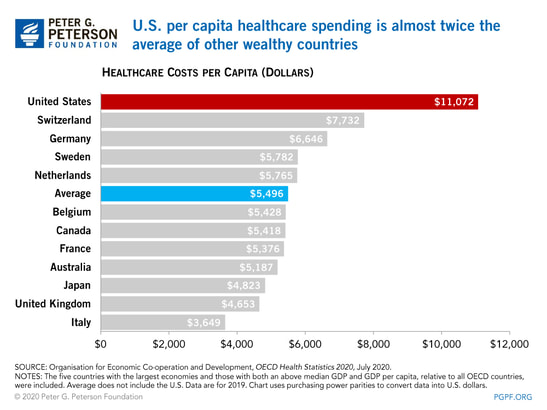

The unfortunate reality is that our current healthcare system is the most expensive in the developed world. Between private insurance premiums, co-pays, and other fees, the average U.S. citizen pays around twice the amount of what people in other countries pay.

But with higher costs comes better results, right? Not exactly.

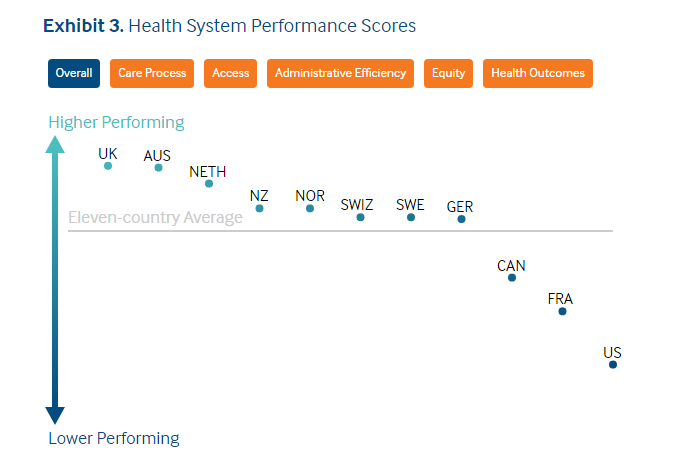

Besides having the highest costs in the developed world, the U.S. also ranks near the bottom of healthcare efficacy. Essentially, the average American citizen pays (much) more for less.

On account of its fractured healthcare system, the U.S. has a declining life expectancy, comparatively high levels of infant and maternal mortality rates, and high levels of medical error.

Medicare for All could help combat all of those issues while still lowering costs for the average person.

Lengthy Wait Times

I've heard many people claim that universal healthcare causes wait times to increase. After all, if everyone can visit the doctor or the dentist without having to fork over thousands of dollars, won't it just make healthcare less accessible?

The irony of this fear is that wait times for American doctors are already long, especially when it comes to seeing certain medical professionals. If you haven't ever been to a dermatologist, try scheduling an appointment with one after finishing this article. Short of demanding that they check out that suspicious mole on your chest, there's a good chance you'll have to wait several weeks or even months to get in. According to data collected by the OECD, the U.S. ranks on the higher side for healthcare wait times. While some countries with universal healthcare like Canada do too, having to wait for healthcare is already a reality for many Americans. It's therefore not a valid argument against expanding coverage.

Universal Healthcare Is Socialism

Decades of McCarthyism have caused socialism to become a buzzword used by people who, most of the time, don't know what it means. While I don't expect everyone to go and read socialist literature, I do hope that people can refrain from using Facebook memes as their source of information.

Socialism is an economic system where the means of production are in the hands of the people. Leftists have been arguing about the exact definition for centuries, but most would agree that creating an equitable society is the long-term goal. They'd also agree that the Soviet Union, Venezuela, and other commonly-cited examples of "socialist" countries are not worth emulating. It's worth noting that most European politicians, Bernie Sanders, and yes—even Alexandria Ocasio-Cortez, are not socialists. They're social democrats who support a watered-down, less-buzzwordy version of socialism that meshes with a free market system. While the United States remains a shining beacon of capitalism for the world, the reality is that our society has many socialist policies we take for granted. Here are just a few of them:

You'll notice that none of these institutions, laws, or policies are profit-motivated. In fact, they're often at odds with what greedy corporations and individuals would like, yet we keep them regardless since we know they improve people's lives. Medicare for All/universal healthcare/whatever you want to call it would do the same thing, so don't fall for the "it's socialism" line. Don't let people use their faulty understanding of an economic system as an excuse to end talks of giving people healthcare.

It's Time for a Change

No matter what metric you look at, it's clear that our healthcare system needs a revamp. It's also clear that many of the arguments used to defend it in its current state don't hold up.

I don't want to live in a country where I and millions of others have to put our health on the line to avoid having to spend hundreds or thousands of dollars. I don't want to live in a country where private insurance companies and Big Pharma get to enrich themselves while real people suffer and die as a result. If the wealthiest country on Earth can't provide even the most basic of services to its citizens, it needs a reality check.

If you enjoyed reading this article, take a moment to check out some of the other Study With Chandler posts. You can also support my writing, which encourages me to do more!


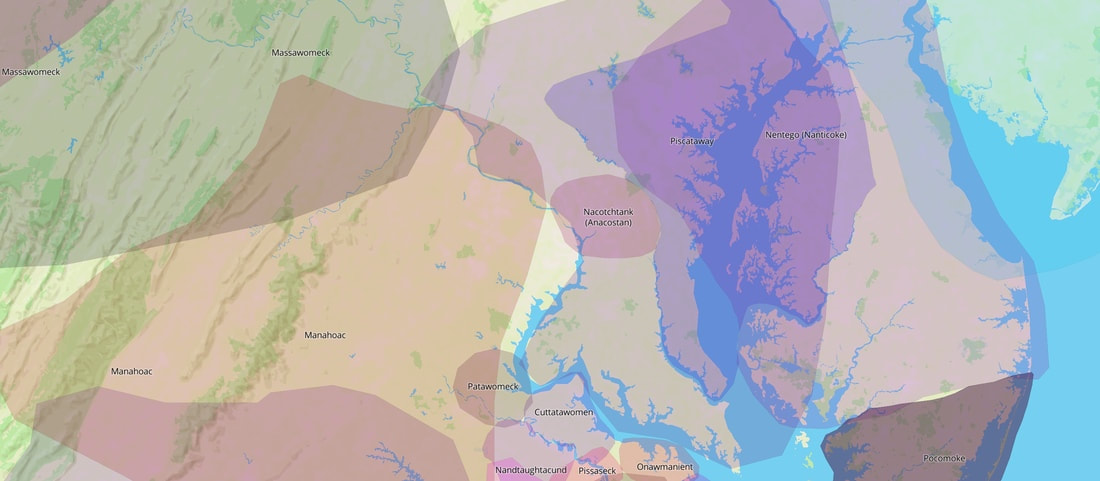

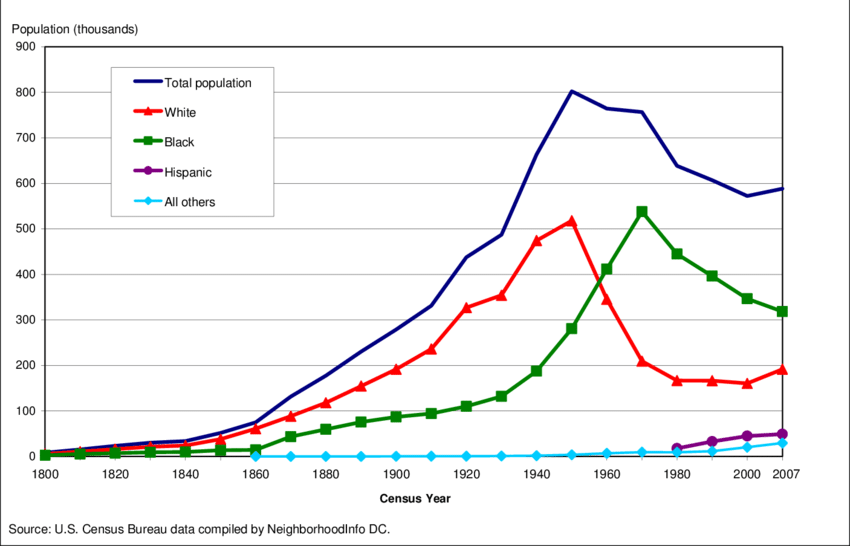

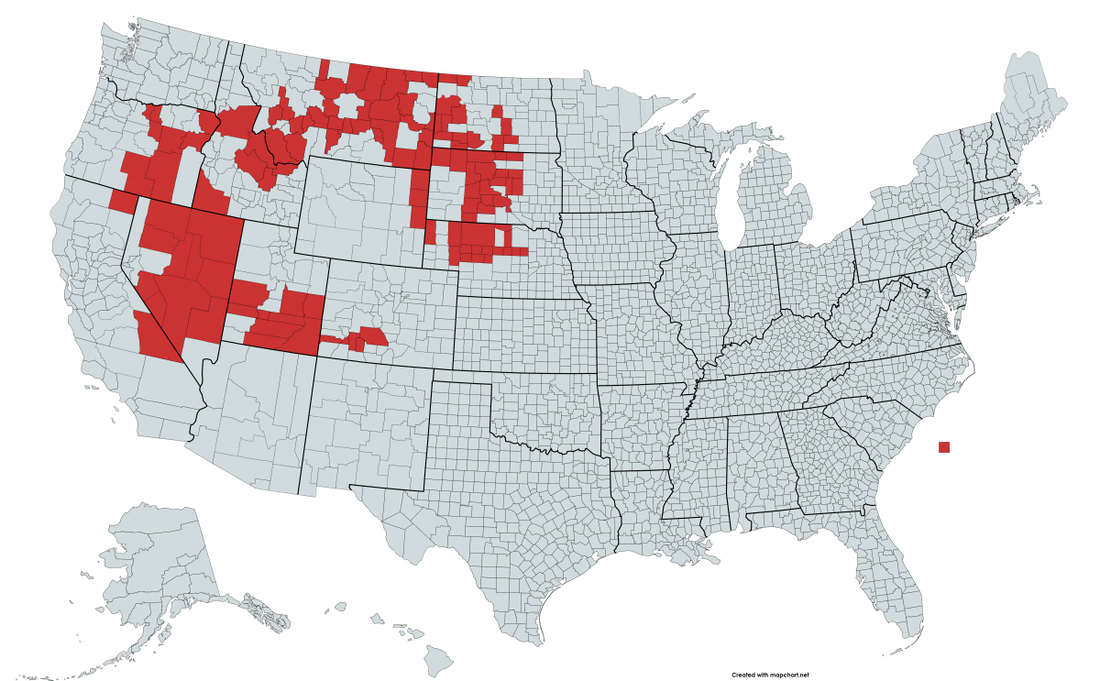

No taxation without representation! Today, American students across the country learn those words at a young age. They refer, of course, to the chief grievance that the Thirteen Colonies had towards Great Britain: taxes like the Stamp Act and the Tea Act were unconstitutional since colonists had no say in the British Parliament. This idea of illegitimate taxation helped lead to the American Revolution and, ultimately, the founding of the United States.

It's interesting that while that history lesson has become sensationalized, few people realize that today, several places in the country do indeed have taxation without representation. One of them is the nation's capital, Washington, D.C. In fact, starting in 2000, D.C. license plates read "Taxation Without Representation." Since 2017, they've read "End Taxation Without Representation." But why is D.C. not a state? Should it become one? Let's find out.

This Land Is *My* Land

As we should do anytime we look at U.S. history, let's remember that the English, French, and other colonial powers were not the first people to live in the Americas. Indigenous peoples occupied the continent for thousands of years before the first European caravel arrived. Some modern experts estimate that as many as 100 million people lived across North and South America before Christopher Columbus arrived. (For a great deep dive into the pre-Columbian Americas, I recommend 1491: New Revelations of the Americas Before Columbus by Charles C. Mann.)

In the area that we today know as Washington, D.C., the Nacotchtank or Anacostan people lived and called it home. The region, abundant in natural resources, allowed them to create a thriving community that traded with places as far away as New York. The Nacotchtank people's first contact with Europeans was in 1608 with Captain John Smith from Jamestown. Although the first encounter was friendly, later interactions were not.
Less than 40 years later, only a quarter of the original indigenous people remained in the area. Europeans killed or drove off the majority, while many of the remaining people died from diseases brought by the foreigners.

A New Capital for a New Country

After displacing the remaining natives, the colonies of Maryland and Virginia absorbed the area of D.C., and it remained a part of them until 1790. In that year, the new American Congress passed the Residence Act. This allowed the United States to create a capital on the banks of the Potomac River. President George Washington signed the act into law and chose the exact location himself. Virginia and Maryland donated land to help create the new federal district, one that measured in total no more than 100 square miles. The name of the new city honored the first president, while Columbia was a feminine and poetic version of Columbus common at the time.

The Framers hoped that such a setup would prevent single states from becoming too powerful. An isolated district that housed the federal government could help keep the various parts of the country in check.

One Step Forward, Many Steps Back

Years later, in 1865, the American Civil War ended and the country entered the Reconstruction era. For the first time in American history, the Constitution now (at least on paper) protected the rights of African Americans. Unsurprisingly, the transition from chattel slave to American citizen was anything but an easy, simple, or fair process. Freed slaves continued to face discrimination all across the country, especially in the South. President Andrew Johnson (who had been a slaveholder himself) gave the former Confederate states the freedom to decide the rights of African Americans. As slavery was the principal cause of the Civil War, the southern states quickly made racial discrimination a priority.

Given the city's location, African American residents of D.C. (who made up around one-third of the population) suffered heavily during this era. Black residents who managed to obtain local political positions were removed from power less than a decade later. Many white politicians weren't shy about why they were passing these restrictions. John Tyler Morgan, a U.S. Senator, said that Congress needed to "burn down the barn to get rid of the rats...the rats being the negro population and the barn being the government of the District of Columbia." These sorts of "Jim Crow Laws" became codified into the U.S. legal system, where they remained until the latter half of the 20th century.

A String of Hard-Fought Victories (Plus More Setbacks)

For the next century, Washington, D.C. voting restrictions remained in effect as the city's demographics evolved. By the late 1950s, D.C. had become the first predominantly Black city in the country. Around that time, years of activism and nonviolent protests led to the ratification of the Twenty-Third Amendment in 1961 and the passing of the Civil Rights Act in 1964. For the first time in history, D.C. residents could vote in presidential elections. In 1964, they voted overwhelmingly to support Lyndon B. Johnson, the sitting president who had pushed for the Civil Rights Act. Under the amendment, the city also gained three electors to cast votes in the Electoral College. On top of that, in 1973, the Home Rule Act gave D.C. residents the right to elect their city council and mayor.

Yet while these victories were big, they were not without limitations. The Twenty-Third Amendment caps the District's electors at the number allotted to the least populous state, which in practice means three. If the city one day grows into a megacity with millions of people, its electoral power will remain the same, at least in the current legal framework. The federal government also heavily intervened in local elections and policy early on. Many members of Congress doubted whether a predominantly Black city could govern itself.

The Situation Today

Over the past few decades, D.C. residents have continued to demand the rights that other U.S. citizens enjoy.
Now, with the Democrats in control of both the White House and Congress, residents are hopeful that they might finally get the political representation they deserve. They believe that the best way to do this is by granting the District statehood. In April of this year, a bill granting Washington, D.C. statehood passed through the House. Although the bill would benefit more than 700,000 people, it's unlikely that it will survive the filibuster and pass through the Senate.

Proponents of the bill argue that while D.C. has seen its rights expanded, it's still not enough. The District needs congressional representation, namely in the form of senators and representatives (ones who hold actual legislative power).

Those against D.C. statehood cite two main arguments. The first is that a city of 68 square miles shouldn't become a state. Yet while that argument might sound valid when looking at a geographic map, it doesn't hold up when looking at one that shows population. A population of 700,000 might not sound huge, but it's larger than the populations of both Vermont and Wyoming. But regardless of whether those people are spread out across a massive state or reside in a tiny urban area, don't they deserve the same rights as the rest of the country?

The second argument is that the Residence Act is clear: D.C. needs to be a separate federal district, independent from the states it ties together. This type of originalist thinking might seem strong, but again, it falls apart with even a quick examination. It's true that the Constitution demands a federal district no larger than 100 square miles. But what about the land outside of that area? The area that houses government buildings and monuments could easily remain under federal control. At the same time, the other parts of the District could become a state, one that has the same rights as the other 50 states in the Union.

51: An Odd Number, But the Right Number

While tossing out our old flags to buy updated ones might sound strange, it's a sacrifice we should be willing to make. Having a say in the democratic process is the epitome of what it means to be an American. No U.S. citizens should be denied that fundamental right, no matter where they live.

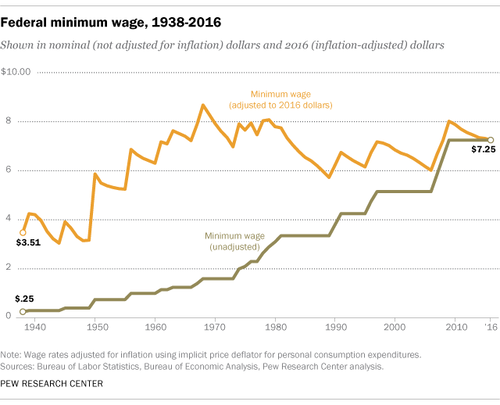

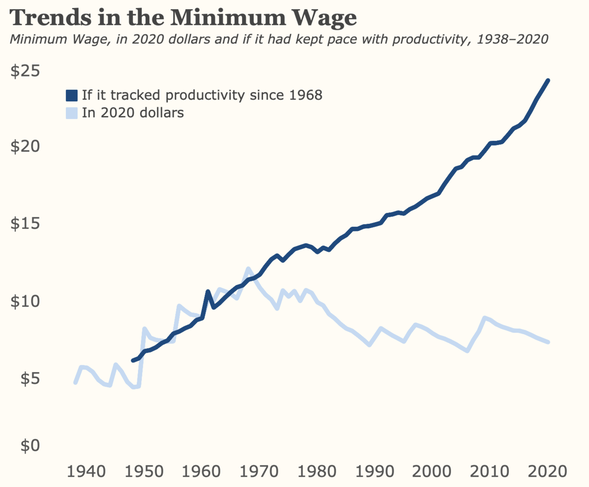

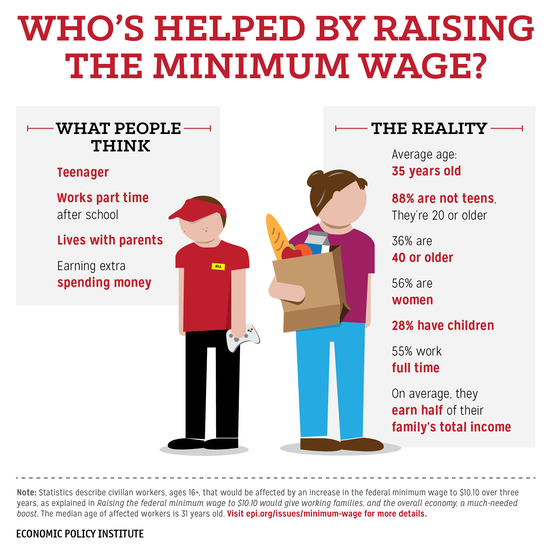

For the United States to function as a democracy, it needs to treat all its citizens fairly. Granting Washington, D.C. statehood is one step that can help the country get closer to achieving that goal.

Make sure to leave me your thoughts, feelings, and hate messages in the comments below. For more deep dives into the American past, check out the other content that Study With Chandler offers.

Politicians and mainstream media have a tendency to make issues with widespread public support seem contentious. Background checks on guns, the legalization of marijuana, and paid maternity leave are some of those issues. Yet while all of those do indeed have the support of most Americans, today we're going to be focusing on something else entirely: the $15 minimum wage.

According to a recent survey conducted by Pew Research, around six in ten Americans support a $15 federal minimum wage. Specifically, it has the support of 87% of Democrats and 28% of Republicans. But is $15 enough, or is it too much? Won't increasing the minimum wage lead to massive amounts of inflation? Will hiking it up too far lead to the collapse of Western civilization as we know it? To answer those sorts of questions, we're going to look at history, facts, and evidence to reach a conclusion. Let's get started!

Gilded Origins

Towards the end of the 19th century, the United States was suffering through the Gilded Age. Wealth inequality reached record levels, and industrialists employed men, women, and children in brutal conditions across the country. In response, labor movements began fighting for workers' rights. With the dawn of the 20th century and the Progressive Era, groups began to fight for policies that we now take for granted. These include things like child labor laws, the five-day workweek, and of course, a minimum wage.
In 1912, Massachusetts became the first state to pass a minimum wage law, and in 1938, the Fair Labor Standards Act set the federal minimum wage at $0.25 an hour (or around $4.55 today). Over the following decades, the minimum wage climbed every several years. You can see its growth on the darker line on the chart below. Today, the minimum wage is at $7.25. Many states across the country have increased it, but the last time the federal government did was in 2009.

The Inflation Issue

At first glance, the graph above looks great. You can see that there is consistent, measurable growth over the 20th century. However, problems become apparent when we factor in inflation. As any person over the age of 65 can tell you, a dollar (or even a quarter) could get you quite a bit years ago. Today, you're lucky to get a pack of gum with such small amounts of money. That's because of inflation: the decline of your purchasing power over a period of time.

In a perfect world, our wages would increase consistently with the cost of living. This would negate the damaging effects of inflation and ensure that one day, you too tell your grandchildren about how far a dollar could take you 50 years ago. However, if you look back at that graph, you'll notice that there's an orange line above the darker one. That represents the actual value of the minimum wage after taking inflation into account. From looking at that line, you can see that the inflation-adjusted amount has remained more or less the same for 50 years. You can also see that minimum wage workers in the 60s had greater purchasing power than those today.

The Productivity Problem

Unfortunately, inflation isn't the only factor that we have to take into account. We also have to consider worker productivity. One of the pillars of most economic systems is the idea that hard work deserves reward (or at the very least, adequate compensation). The easiest way to measure "hard work" is by looking at worker productivity.
And when you look at worker productivity over the course of the past century, you find that it's increased dramatically. Yet, as the chart below shows, the minimum wage has not only failed to keep up with productivity, it's fallen far behind. You'll notice that the gap between these two figures isn't small. In fact, after factoring in productivity, minimum wage workers today should be making more than $24 an hour!

Fargo vs. New York City

Now, if you're a small business owner who operates in rural America, you might hear "$24 minimum wage" and think it's insanity. And to some extent, that's valid. One important distinction that people need to make is how local economies differ across the country. A one-bedroom apartment in New York City will cost you around $3,000, while a similar apartment in Fargo, North Dakota, will cost you around $800. Wages reflect this.

However, that doesn't mean that the minimum wage shouldn't increase. As these figures show, it needs to go up somewhat across the entire country. In certain places with higher costs of living, $15 might not even be enough. It's also important to point out that the majority of minimum wage workers work in urban environments. They're not working in places like Fargo. They're working in areas like Queens and the Bronx. Policymakers need to avoid a one-size-fits-all model. However, we also need to make sure that workers make the money they deserve. No index justifies why the minimum wage is as low as it is today.

Inflation 2.0

Many people worry that if the government increases the minimum wage, it will accelerate price inflation. While this is a valid concern, it's unfortunately become a talking point that people use to shut down discussion of even moderate increases to the minimum wage. Remember that all proposals have "a downside". Funding public education is expensive. Holding corporations responsible for environmental damage is difficult.
Medicare for All might cause some people to lose their jobs. The key is not to let these potential problems bog us down, but instead to figure out how to solve them.

The fact is that to some extent, yes, the prices of certain goods can increase when you have more money in circulation. But governments have ways to offset that. That's why we have institutions like the Federal Reserve. It's also clear that people having more money isn't a bad thing. When people have more disposable income, they spend it. That money then ultimately makes its way back into businesses, in turn creating more jobs.

Of course, this process doesn't happen overnight. But most economists realize that and propose gradual increases to the minimum wage. The reason people are demanding $15 now is that there hasn't been an increase in 11 years. As we saw, it also hasn't kept pace with inflation or productivity. And remember, inflation happens either way!

The Million (or Fifteen) Dollar Question: What Is the Minimum Wage for?

Another common argument is that the minimum wage isn't meant to be a wage that people use to survive. Many see it as something that high school students and other temporary workers use to get some money on the side. Many minimum wage workers are indeed between the ages of 16 and 24. However, like the inflation argument, too many people use that fact to shut down talks of raising it at all.

55% of minimum-wage earners work full-time, using the money they earn to support their families. The vast majority are not in high school, and many work to support children or other family members. Let's also not forget the origins of the minimum wage: workers who fought for years for better working conditions and pay that doesn't reduce them to indentured servants. The original labor unions certainly weren't fighting for "spending money".

A Change of Heart

I remember sharing something on Facebook years ago that talked disparagingly of people fighting for the $15 minimum wage.
I remember thinking, "Why not $100 an hour, or $1,000?" Since sharing that post, I learned that economists and policymakers don't choose some arbitrary number to be the minimum wage. It comes out of several different variables, like inflation and productivity.

As for whether or not someone "deserves the money", I would encourage anyone who thinks it's easy to stand on their feet for eight hours and deal with angry customers to work a week at Wendy's or Walmart. I worked as a dishwasher for a few months in high school, and it was one of the most challenging jobs (physically and mentally) I ever had. It also surprised me to learn that the average minimum wage worker isn't a teenager working as a dishwasher on the weekend but rather a middle-aged woman working full-time to support her family.

My current feelings towards increasing the minimum wage? The concerns that people have about raising it are valid. At the same time, we shouldn't use them as an excuse to close the discussion, especially when the evidence shows that an increase is long overdue.

The $15 Minimum Wage: Where We Go From Here

Like many other issues of our time, the $15 minimum wage has become a politicized topic towards which people on both sides feel strongly.

Yet to some extent, I don't think that it needs to be. It's clear that the minimum wage does need to go up around the country. Exactly how much the government needs to increase it depends on the area and cost of living, and that's something policy experts should consider. Now that we've looked at some facts and the history behind it, I'm interested in knowing your thoughts about increasing the minimum wage. Leave a comment down below and let me know what you think about it. Study With Chandler is young but growing fast. For more great content, make sure to check out the rest of the site. |

by Chandler Webster |
